Cold Path Storage

Create Data Lake Store and Stream Data from IoT Hub using Azure Stream Analytics

Azure Data Lake Store is an enterprise-wide hyper-scale repository for big data analytic workloads. Azure Data Lake enables you to capture data of any size, type, and ingestion speed in one single place for operational and exploratory analytics. Data Lake Store can store trillions of files. A single file can be larger than one petabyte in size. This makes Data Lake Store ideal for storing any type of data including massive datasets like high-resolution video, genomic and seismic datasets, medical data, and data from a wide variety of industries.

Create Azure Data Lake Store

Create a hyper-scale Data Lake Store to hold the IoT data. In the Azure portal, click Create a resource.

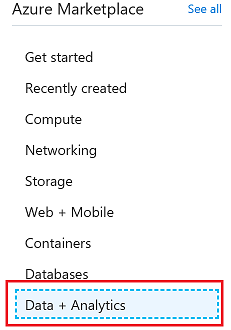

Click on Data + Analytics

Click on Data Lake Store

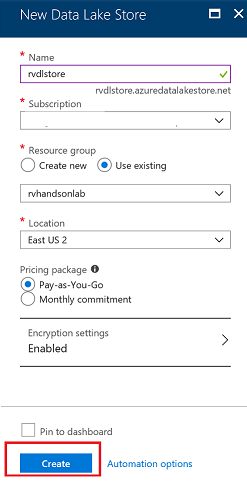

While creating the Data Lake Store, you can choose whether to encrypt it.

Data Lake Store protects your data assets and extends your on-premises security and governance controls to the cloud.

Your data is always encrypted:

- in motion, using SSL

- at rest, using service-managed or user-managed HSM-backed keys in Azure Key Vault.

Single sign-on (SSO), multi-factor authentication, and seamless management of millions of identities are built in through Azure Active Directory. Authorize users and groups with fine-grained POSIX-based ACLs for all data in your store, and enable role-based access control. Meet security and regulatory compliance needs by auditing every access or configuration change to the system.

Click the Create button.

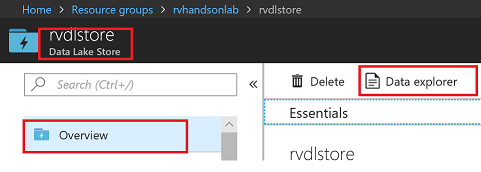

Explore Data in Data Lake Store

Create Folders in Data Lake Store

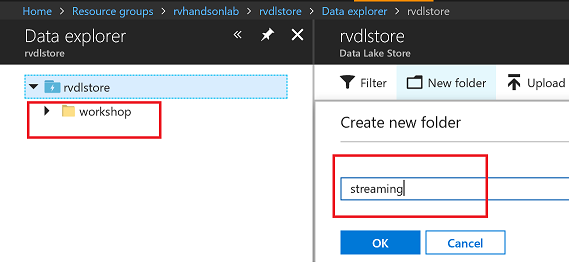

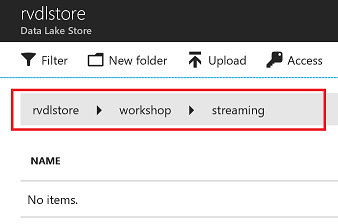

Create a /workshop/streaming folder to hold the streaming data that your device sends through IoT Hub and that the Stream Analytics job writes to the store.

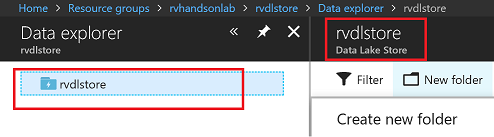

Create the /workshop folder.

Create the /workshop/streaming folder inside it.

You should now have the /workshop/streaming folder in place before you start streaming data to Data Lake Store.
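Data Explorer creates one folder at a time, so each parent has to exist before its child. As a minimal sketch, assuming you want to script the same layout, the hypothetical helper `folders_to_create` below lists the folders to create, parents first, for a target path. It only computes paths and does not call any Azure API.

```python
from pathlib import PurePosixPath


def folders_to_create(target: str) -> list[str]:
    """Return every folder on the way to `target`, parents first.

    Hypothetical helper: it only computes the paths; the folders are
    still created in Data Explorer (or with another tool).
    """
    path = PurePosixPath(target)
    # path.parents runs from the nearest parent up to "/", so reverse it
    # and drop the root, which always exists.
    chain = [p for p in reversed(path.parents) if str(p) != "/"]
    chain.append(path)
    return [str(p) for p in chain]


print(folders_to_create("/workshop/streaming"))
# ['/workshop', '/workshop/streaming']
```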

Create Stream Analytics Job

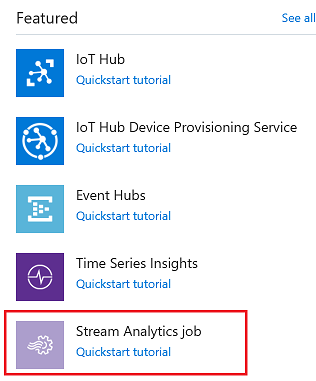

Azure Stream Analytics is a managed event-processing engine that lets you set up real-time analytic computations on streaming data. The data can come from devices, sensors, websites, social media feeds, applications, infrastructure systems, and more.

To create the job, click Create a resource.

Click on Data + Analytics

Click on Stream Analytics Job

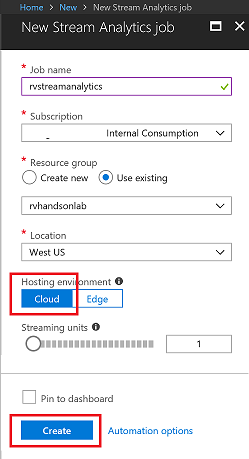

A Stream Analytics job can run either in the cloud or on the Edge. For this workshop, choose to run it in the cloud.

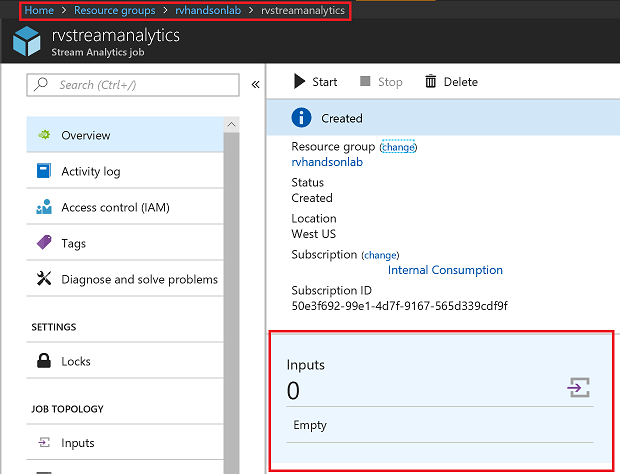

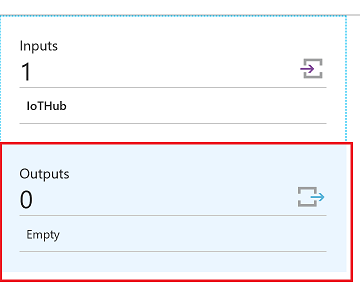

Add Input for Streaming Job

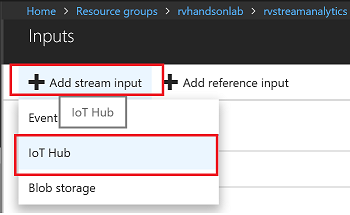

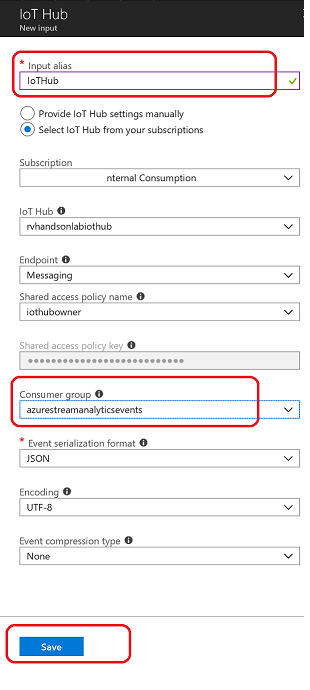

Select IoT Hub as the input.

Make sure to provide a consumer group. Each consumer group supports up to 5 concurrent readers (per partition), such as output sinks or other consumers, so create a new consumer group for every additional 5 readers. You can create up to 32 consumer groups.
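The consumer-group rule above is a ceiling division. The sketch below restates it with the limits quoted in this step (5 readers per group, 32 groups) as defaults; the function name is hypothetical, and the actual limits depend on your IoT Hub tier, so treat the numbers as parameters rather than guarantees.

```python
def consumer_groups_needed(readers: int, per_group: int = 5, max_groups: int = 32) -> int:
    """Number of consumer groups so that no group has more than `per_group` readers.

    Defaults restate the limits quoted in this workshop (assumed, tier-dependent).
    """
    if readers < 1:
        raise ValueError("need at least one reader")
    # Integer ceiling division: 6 readers with 5 per group -> 2 groups.
    groups = (readers + per_group - 1) // per_group
    if groups > max_groups:
        raise ValueError(f"{readers} readers need {groups} groups; limit is {max_groups}")
    return groups


print(consumer_groups_needed(6))  # 2
```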

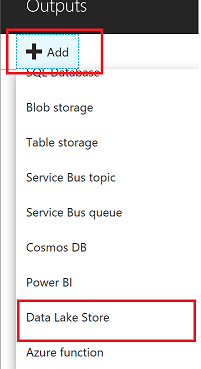

Add Data Lake Store as Output for Streaming Job

Select Data Lake Store as output sink

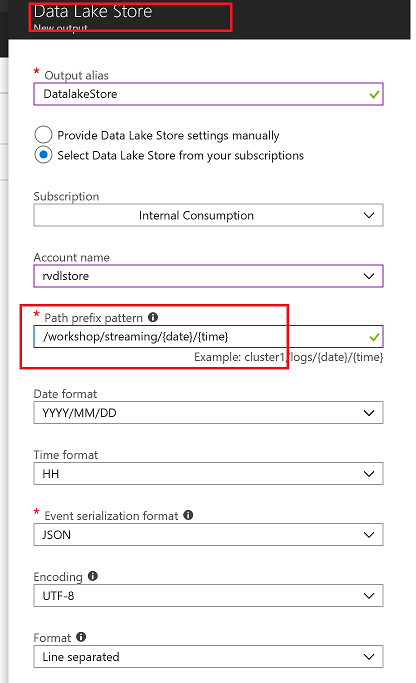

Select the Data Lake Store account you created in the previous steps, and provide the path prefix pattern (folder structure) under which the job writes data to the store.

Use /workshop/streaming/{date}/{time} with the date format YYYY/MM/DD and the time format HH. For data written during the 11:00 hour on 30 March 2018, this resolves to /workshop/streaming/2018/03/30/11 in the store.
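To see how the pattern maps to a folder, the hypothetical helper below expands the `{date}` and `{time}` tokens with the YYYY/MM/DD and HH formats chosen here. It is only an illustration: Stream Analytics performs this expansion itself, and the sketch does no time-zone conversion on the timestamp it is given.

```python
from datetime import datetime


def expand_path_prefix(pattern: str, ts: datetime) -> str:
    """Expand {date} as YYYY/MM/DD and {time} as HH (hypothetical helper)."""
    return (pattern
            .replace("{date}", ts.strftime("%Y/%m/%d"))
            .replace("{time}", ts.strftime("%H")))


print(expand_path_prefix("/workshop/streaming/{date}/{time}", datetime(2018, 3, 30, 11, 42)))
# /workshop/streaming/2018/03/30/11
```

Every event in the same hour maps to the same folder, which is why the store ends up with one folder per hour.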

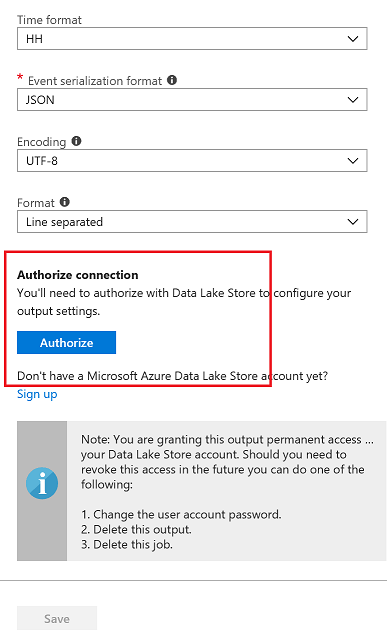

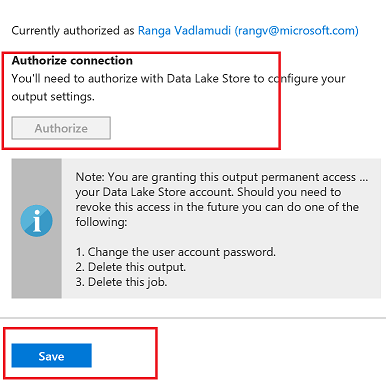

You must authorize the Data Lake Store connection so that Stream Analytics can write to the store. Data Lake Store secures this access with:

- Multi-factor authentication based on OAuth 2.0

- Integration with on-premises AD for federated authentication

- Role-based access control

- Privileged account management

- Application usage monitoring and rich auditing

- Security monitoring and alerting

- Fine-grained ACLs for AD identities

A popup window opens. Once it closes and authorization is complete, the Authorize button is greyed out. In some cases the popup does not appear; if that happens, try again in an incognito (private) browser window.

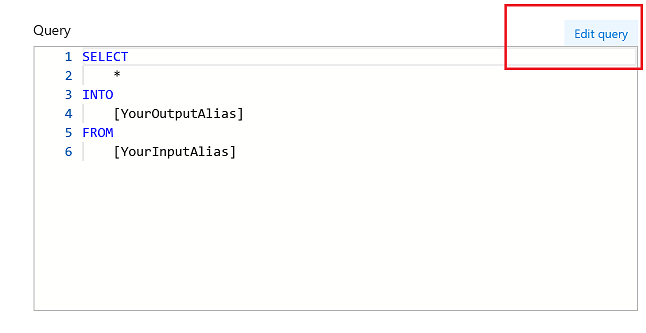

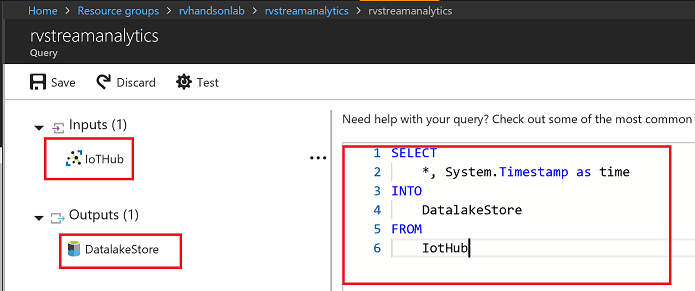

Edit Stream Analytics Query

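Edit the streaming job's query so that it streams data from IoT Hub to Data Lake Store. The names after FROM and INTO must match the input and output aliases you gave when adding them (IotHub and DatalakeStore in this example).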

Query

    SELECT
        *,
        System.Timestamp AS time
    INTO
        DatalakeStore
    FROM
        IotHub

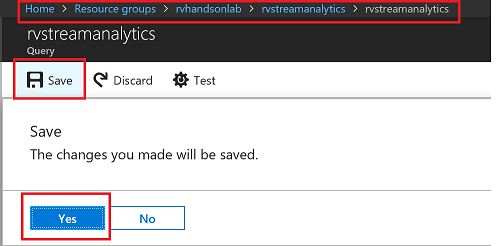
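To make the query's effect concrete, the sketch below mimics it in plain Python for a single event: every input field is passed through (the `*`) and a `time` field holding the event's timestamp is added. It is only an illustration; the sample fields `deviceId` and `temperature` are hypothetical, and in Stream Analytics the value of System.Timestamp comes from the engine rather than from a function argument.

```python
from datetime import datetime, timezone


def project_event(event: dict, event_time: datetime) -> dict:
    """Emulate SELECT *, System.Timestamp AS time for one event."""
    row = dict(event)                     # SELECT *: copy every input field
    row["time"] = event_time.isoformat()  # add the timestamp column
    return row


sample = {"deviceId": "device-01", "temperature": 21.5}  # hypothetical event
print(project_event(sample, datetime(2018, 3, 30, 11, 5, tzinfo=timezone.utc)))
# {'deviceId': 'device-01', 'temperature': 21.5, 'time': '2018-03-30T11:05:00+00:00'}
```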

Save the query, and click Yes when prompted to confirm.

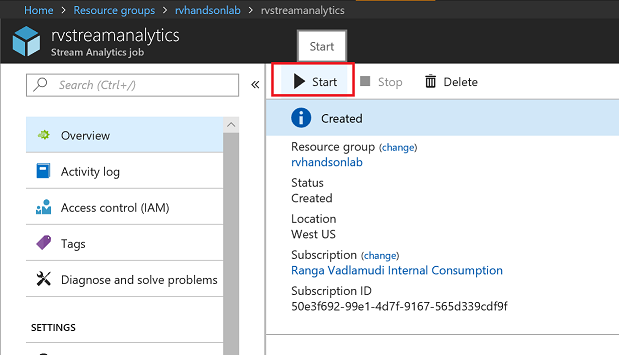

Start the Stream Analytics Job

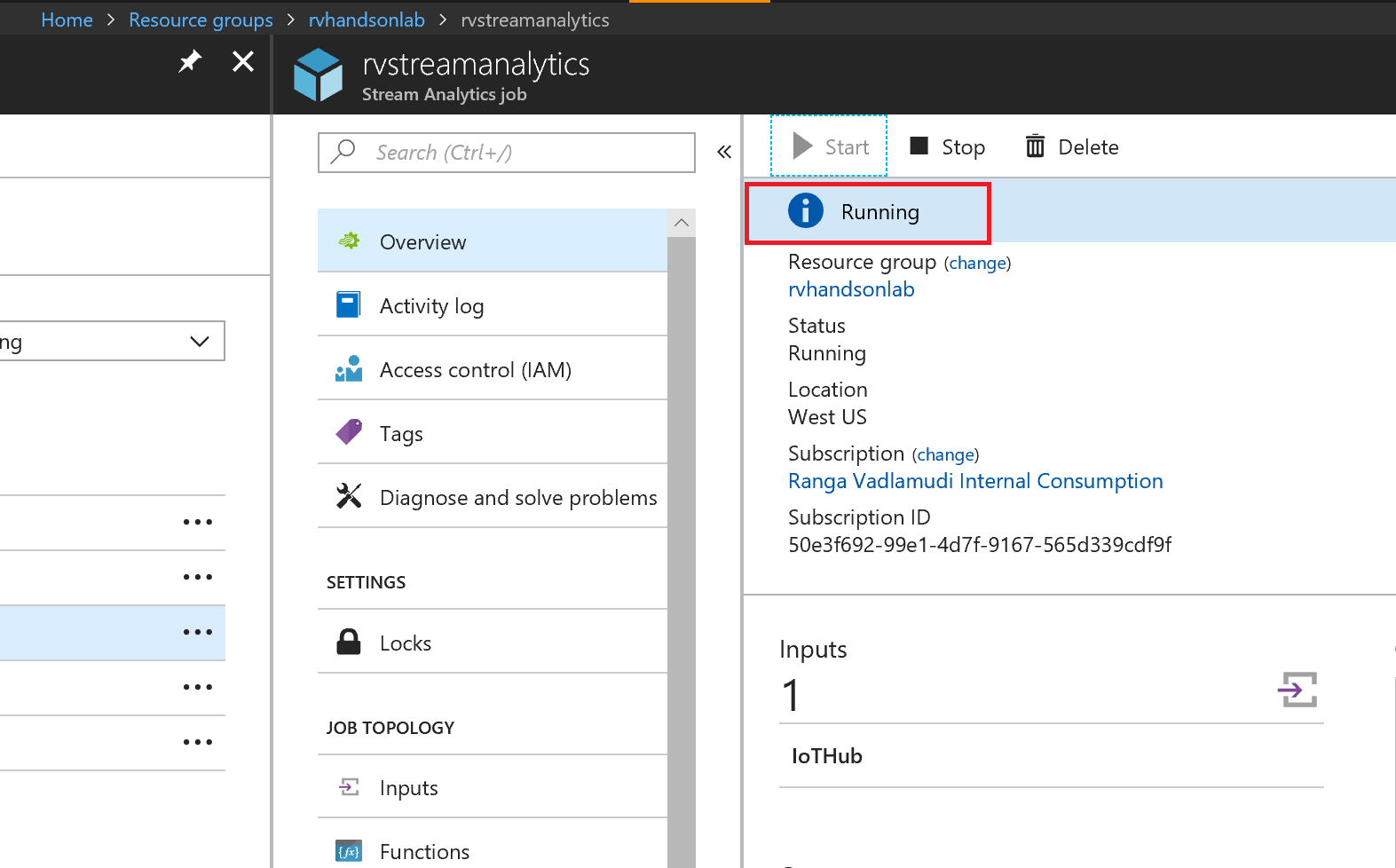

Start the job. It reads data from IoT Hub and writes it to Data Lake Store.

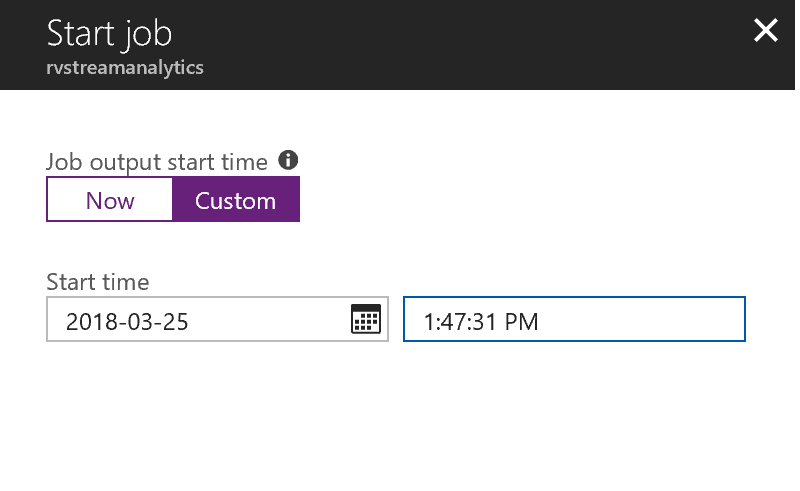

When starting the job, you can choose a custom start time a few hours in the past so that the job also picks up data from when your device started streaming.
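If you want a quick way to work out a custom start time, the hypothetical helper below subtracts a number of hours from the current UTC time (or from a time you pass in) and rounds down to the start of the hour, so the start lines up with the hourly output folders. The rounding is a convenience choice of this sketch, not something the portal requires.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional


def custom_start_time(hours_back: int, now: Optional[datetime] = None) -> datetime:
    """Return the time `hours_back` hours before `now` (default: current UTC
    time), rounded down to the start of the hour. Hypothetical helper."""
    if hours_back < 0:
        raise ValueError("hours_back must be non-negative")
    now = now or datetime.now(timezone.utc)
    start = now - timedelta(hours=hours_back)
    # Round down so the start lines up with the hourly output folders.
    return start.replace(minute=0, second=0, microsecond=0)


print(custom_start_time(3, datetime(2018, 3, 30, 11, 42, tzinfo=timezone.utc)))
# 2018-03-30 08:00:00+00:00
```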

Wait until the job reaches the Running state. If you see errors, they may come from your query; check that its syntax is correct.

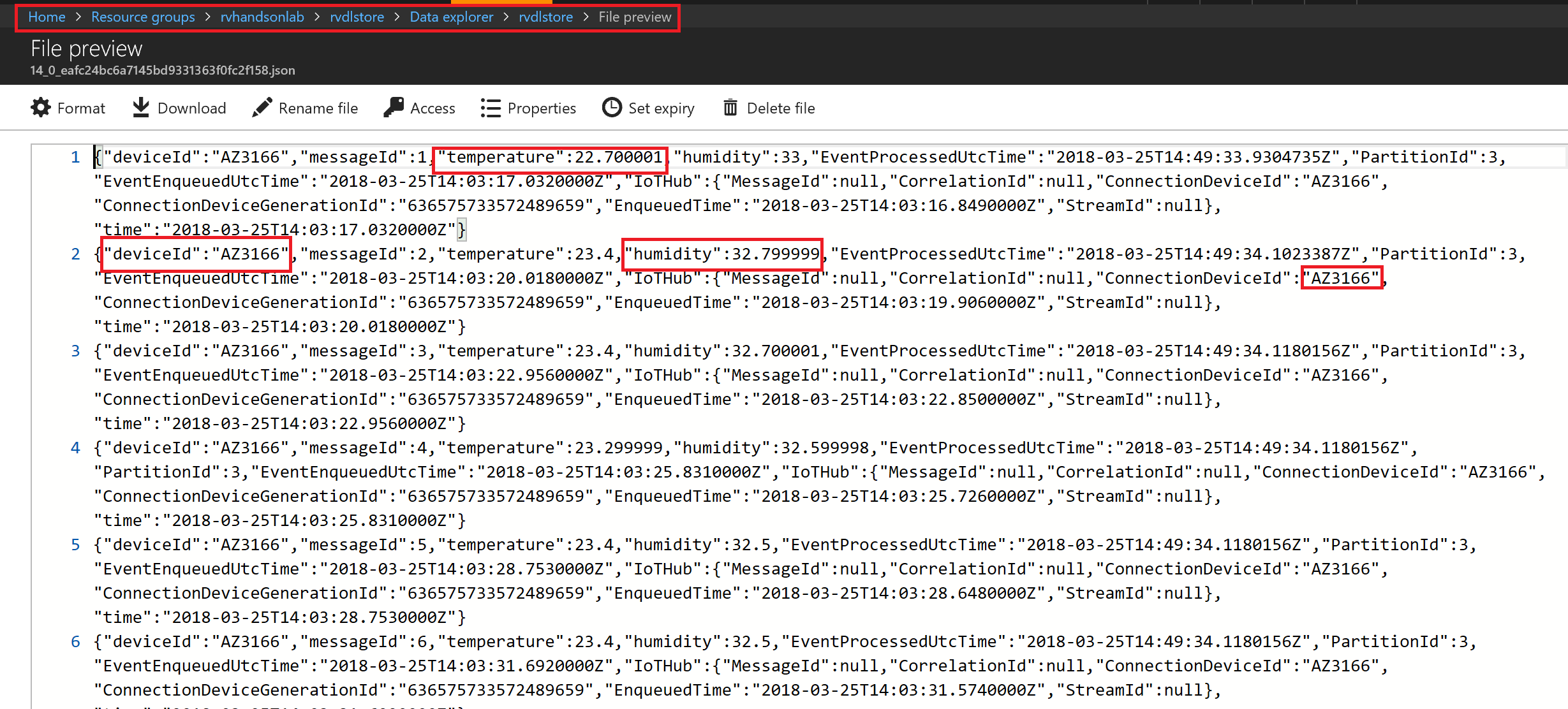

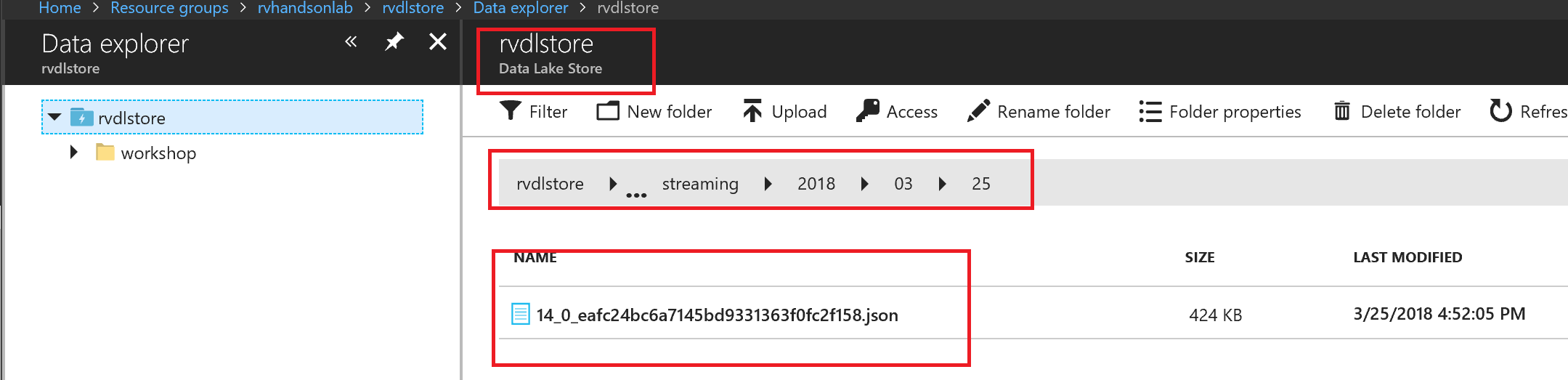

Explore Streaming Data

Go to the Data Lake Store Data Explorer and drill down into the /workshop/streaming folder. You will see folders created in the YYYY/MM/DD/HH format.
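When you later process these folders programmatically, it helps to turn a folder path back into the hour it represents. The hypothetical helper below does that for the YYYY/MM/DD/HH layout under /workshop/streaming, assuming the folder times are UTC; it accepts either a folder or a file path inside one.

```python
from datetime import datetime, timezone
from pathlib import PurePosixPath


def hour_from_folder(path: str, root: str = "/workshop/streaming") -> datetime:
    """Map /workshop/streaming/YYYY/MM/DD/HH[/...] to that hour (assumed UTC).

    Hypothetical helper; raises ValueError if the path is not under `root`
    or does not contain all four date/hour levels.
    """
    parts = PurePosixPath(path).relative_to(root).parts
    if len(parts) < 4:
        raise ValueError(f"expected YYYY/MM/DD/HH under {root}: {path}")
    year, month, day, hour = (int(p) for p in parts[:4])
    return datetime(year, month, day, hour, tzinfo=timezone.utc)


print(hour_from_folder("/workshop/streaming/2018/03/30/11"))
# 2018-03-30 11:00:00+00:00
```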

Inside the hour folders you will see JSON files, one file per hour. Open them to explore the data.
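Stream Analytics can write JSON output either as line-separated objects or as a single array. Assuming the line-separated format, the sketch below parses the text of a downloaded file and computes a simple aggregate. The sample records and their fields are hypothetical stand-ins for your device's data.

```python
import json


def read_json_lines(text: str) -> list[dict]:
    """Parse line-separated JSON (one object per line), skipping blank lines."""
    return [json.loads(line) for line in text.splitlines() if line.strip()]


# Hypothetical contents of one hourly output file.
sample = (
    '{"deviceId": "device-01", "temperature": 21.5, "time": "2018-03-30T11:05:00Z"}\n'
    '{"deviceId": "device-01", "temperature": 21.7, "time": "2018-03-30T11:06:00Z"}\n'
)
rows = read_json_lines(sample)
print(len(rows), max(r["temperature"] for r in rows))  # 2 21.7
```

If you chose the array format instead, a single `json.loads` call on the whole file returns the list directly.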